Resource Bottlenecks Don't Scale

March 19, 2014

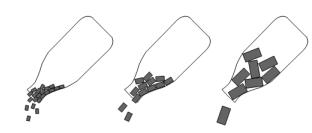

Whenever we talk about bottlenecks in the context of software performance optimization, the following image often comes to mind:

In fact, we also love using the above illustration to explain why memory hogs such as WordPress deliver only marginal throughput. Each brick in the bottle represents a web request. The larger the brick, the more memory it consumes. The total memory of the server is the bottleneck that limits the number of concurrent requests.
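The brick model boils down to a single division. Here is a minimal sketch of it; the function name and the memory figures are hypothetical, chosen only to illustrate the arithmetic:

```python
def max_concurrent_requests(total_memory_mb: float, memory_per_request_mb: float) -> int:
    """Number of request "bricks" that fit in the bottle at the same time."""
    if memory_per_request_mb <= 0:
        raise ValueError("memory_per_request_mb must be positive")
    return int(total_memory_mb // memory_per_request_mb)


# Hypothetical figures: a 2048 MB server, with 64 MB vs. 128 MB per request.
small_bricks = max_concurrent_requests(2048, 64)
large_bricks = max_concurrent_requests(2048, 128)
print(small_bricks, large_bricks)  # 32 16
```

Under this model, doubling the size of a brick exactly halves the number of concurrent requests, and doubling the memory restores it.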

The problem with this mental model is that it leads us to believe that resource bottlenecks are scalable; in other words, that there is a linear correlation between performance and hardware resources.

Recently we optimized a video rendering pipeline. With toned-down effects and resolution, a draft took over 50 hours to render on an i5 laptop; after the optimization, the footage is generated in just 20 minutes, with full effects on. What made the night-and-day difference is the use of compressed footage. Compressing each layer before composition takes much less memory, but it is more CPU-intensive. Counter-intuitively, however, compressed footage significantly sped up the process because more layers could fit in memory. This revelation led us to explain the effect of a bottleneck quite differently:

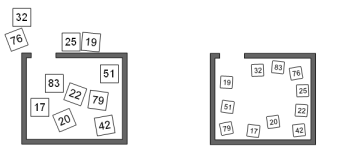

Now imagine you are tasked with sorting the 11 boxes in a room, as shown in the above diagram. You can only sort what you see, and you can only arrange the boxes inside the room. When the boxes are too big and cannot all fit in the room, you have to move a box out in order to bring a new one in. This is a tedious process. If the boxes are small enough that they all fit in the same room, sorting is straightforward.
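The room analogy can be simulated in a few lines. This is a toy model, not a measurement of the rendering pipeline: it assumes the sorter makes several passes over the boxes in the same order, and that the box left untouched the longest is carried out when the room is full. The function name and the number of passes are hypothetical.

```python
from collections import OrderedDict


def count_box_moves(num_boxes: int, room_capacity: int, passes: int) -> int:
    """Count how many times a box has to be carried into the room."""
    if room_capacity < 1:
        raise ValueError("the room must hold at least one box")
    room = OrderedDict()  # boxes currently inside, least recently used first
    moves = 0
    for _ in range(passes):
        for box in range(num_boxes):
            if box in room:
                room.move_to_end(box)  # already inside, nothing to carry
                continue
            if len(room) >= room_capacity:
                room.popitem(last=False)  # carry the oldest box out
            room[box] = True  # carry the new box in
            moves += 1
    return moves


print(count_box_moves(num_boxes=11, room_capacity=11, passes=10))  # 11
print(count_box_moves(num_boxes=11, room_capacity=10, passes=10))  # 110
```

In this sketch, a room that is just one box too small needs ten times as much carrying, because every single look at a box now requires bringing it back in. The cost is a cliff, not a slope.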

In our video optimization example, the key to faster rendering is not just fitting more footage in memory, but fitting all of it. Just like us humans, the machine needs to know all its options before making a decision.

The same principle applies to many aspects of web development. Understanding that resource bottlenecks are not scalable allows us to focus more on resource-efficient designs.

For example, we don't use over-generalized database designs such as Magento's EAV architecture because they incur many unnecessary joins. In relational databases, join operations connect multiple tables, and if these tables fail to fit in memory, the database uses temporary files that are analogous to the boxes outside of the room.
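To make the cost of those joins concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The tables `product_flat` and `product_eav` are hypothetical and heavily simplified; they are not Magento's actual schema, only an illustration of the entity-attribute-value pattern.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Flat design: one row per product, one column per attribute.
    CREATE TABLE product_flat (id INTEGER PRIMARY KEY, name TEXT, color TEXT, price REAL);
    INSERT INTO product_flat VALUES (1, 'Mug', 'red', 9.5);

    -- EAV design: one row per (entity, attribute) pair.
    CREATE TABLE product_eav (entity_id INTEGER, attribute TEXT, value TEXT);
    INSERT INTO product_eav VALUES (1, 'name', 'Mug'), (1, 'color', 'red'), (1, 'price', '9.5');
""")

# Flat design: a single-table lookup.
flat_row = conn.execute(
    "SELECT name, color, price FROM product_flat WHERE id = 1"
).fetchone()

# EAV design: one extra self-join for every additional attribute.
eav_row = conn.execute("""
    SELECT n.value, c.value, CAST(p.value AS REAL)
    FROM product_eav AS n
    JOIN product_eav AS c ON c.entity_id = n.entity_id AND c.attribute = 'color'
    JOIN product_eav AS p ON p.entity_id = n.entity_id AND p.attribute = 'price'
    WHERE n.entity_id = 1 AND n.attribute = 'name'
""").fetchone()

print(flat_row)
print(eav_row)
```

Both queries return the same product, but the EAV version needs two joins to read three attributes, and the number of joins grows with every attribute requested.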

Another example is networking. A simple web request can fit in a single packet. When this packet arrives at the server, the entire request is read in full, and a response is sent. If the request spans multiple packets, however, the server may not see all the packets at the same time or in the correct order. Yes, this is exactly like sorting the boxes. If one packet is lost in transmission, the server will eventually give up and the entire packet stream has to be re-transmitted. It is possible that the re-transmitted request also has a never-arriving packet, causing an infinite resend loop. The packets can be intentionally scrambled and omitted to increase server load; this is actually a form of Denial of Service (DoS) attack.
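The bookkeeping a multi-packet request forces on the receiver can be sketched as follows. This is a toy model, not a TCP implementation (real network stacks handle this in the operating system); the `reassemble` function and the `(sequence_number, payload)` packet format are assumptions made for illustration.

```python
def reassemble(packets, total_packets):
    """Return the complete request, or None while any packet is missing.

    `packets` is an iterable of (sequence_number, payload) in arrival order.
    """
    buffer = {}
    for seq, payload in packets:
        buffer.setdefault(seq, payload)  # keep the first copy, ignore duplicates
    if any(seq not in buffer for seq in range(total_packets)):
        return None  # still waiting: the partial request keeps occupying memory
    return b"".join(buffer[seq] for seq in range(total_packets))


# Packets arriving out of order are held until the request is complete.
print(reassemble([(1, b"world"), (0, b"hello ")], total_packets=2))  # b'hello world'

# A single missing packet leaves the whole request unusable.
print(reassemble([(0, b"hello ")], total_packets=2))  # None
```

A single-packet request never needs the buffer at all, which is the networking equivalent of every box fitting in the room.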

What does this mean to non-technical folks such as end users and end buyers? Well, when we point out that certain software is sloppy with resources, please take the advice with far more gravity. There are certain performance issues that cannot be resolved by simply adding more hardware. What consumes twice as much memory doesn't just halve your throughput; it may disable your service altogether.

For the record, our websites and full-fledged management applications typically take up to 1/40th of the memory of a freshly installed WordPress website, and with server optimization the ratio can be further improved to about 1:500.
